The Promise and Potential of AI Infrastructure

Parallels to Cloud, the Emergence of the AI Engineer, and the Outflows of a Multi-Model World

If there’s anything this past weekend has shown, with the OpenAI saga effectively occupying the mental real estate of everyone in the tech ecosystem, it’s that generative AI has officially cemented its place as the center of gravity of technology, and potentially the economy at large. One year ago, it was akin to a toy— ChatGPT being an elegant product that captured the imagination of the mainstream consciousness and opened our eyes to the vast potential of large language models. Almost a year later, we’re all grappling with how to make room for and best harness this radically consequential technology, indicative of a real paradigm shift with the potential to create trillions of dollars in market cap.

Here’s how I’m thinking about the AI landscape and where things go from here.

It’s known that generative AI is a real technological shift. Every massive technological shift introduces a massive amount of entropy to the system. While the shift itself is massively additive to progress as a whole, it changes the state of the system— taking it from one of stability and equilibrium to one of chaos. Akin to the addition of a new keystone piece to a complex puzzle, it forces a reconfiguration of existing parts and the overall structure at large. All of a sudden, everyone is trying to change and adapt to the new landscape, evolving their workflows and tech stacks in a way that squeezes the maximal lemonade out of the lemon of technology that’s emergent. And they have to— if they don’t, someone else in their market will, and letting that happen is ultimately the death sentence for a company.

Because the step-function shift creates massive amounts of volatility and chaos, there always exists a set of companies that populate various niches with the function of bringing order to that chaos, in whatever domain they’re playing in. Creating the conditions, in the form of products and services, to enable all the possible lemonade to be squeezed out of the lemon. Take the shift to the cloud, for example. The inception of cloud computing was a fundamentally revolutionary one, enabling enterprises to run their workloads on cloud servers and pay for compute as they go (minimizing the activation energy needed to get started), instead of the incumbent model requiring the purchase of monolithic on-premise server racks. But while AWS released EC2 and S3 in 2006, unlocking the full potential of any new technology goes far beyond the ingenuity of any one business.

AWS’s rise was great, but that still left unanswered questions on how enterprises could actually fully operate and build in the cloud. How do you make the most of your data in the cloud? How do you monitor your applications in the cloud? How do you ensure security? Enter a number of foundational cloud companies, often categorized as cloud infrastructure, which answered these questions with stellar products.

Companies like MongoDB and Snowflake answered the data question, both in terms of how to store a large amount of data, often coming in a number of forms and structures, and how to run analytical queries on that data in a fast and seamless way. Companies like AppDynamics, New Relic, and Datadog came in to answer the observability question: while they initially started in niche areas of the market (New Relic aimed at application monitoring for SMBs while AppD started off with an enterprise focus, Datadog’s flagship product was focused in infrastructure monitoring), the three eventually converged on similar themes associated with giving developers and builders the highest quality of visibility and legibility into their applications. In cybersecurity, Crowdstrike came to market with Falcon, a SaaS platform for endpoint protection, detection, and response; being built for the cloud enabled them to serve customers in ways that incumbents like McAfee couldn’t (CrowdStrike founder George Kurtz was actually the CTO of McAfee before starting CrowdStrike).

The beauty of great infrastructure companies is that they have fantastic business genetics; being enabling agents of success for enterprises at an application layer, they’re positioned in such a way that consumption of their product is aligned with increased growth for their customers. Especially when a product inserts itself as a center of gravity within an enterprise and the switching costs begin to mount up, compounding revenue within a customer becomes the default state of revenue evolution. It’s why the net dollar retention (NDR) of cloud infrastructure companies are top-tier: Snowflake with a $142% NDR, Confluent with a 130% NDR, HashiCorp and GitLab both with a 124% NDR, and MongoDB and Datadog both doing 120% in NDR. The properties of these products naturally beget expanding and growing revenue within customers as they continue to grow, given a consumption-based pricing model.

It goes without saying that these cloud infrastructure companies have grown into massively successful businesses; the net dollar retention metrics paint just one dimension of the story. Looking at a select cohort of top-tier public enterprise software companies, infrastructure software businesses have outperformed application software businesses in total combined market cap ($11.9B vs $4.5B), rule of 40 (44% vs 32%), and multiple of return since IPO. This fantastic report from Redpoint provides more color on the relative success of cloud infrastructure companies.

Will AI Infrastructure Resemble Cloud Infrastructure?

The question then becomes, will the AI landscape manifest in a similar structure? Will the ecosystem of AI companies self-organize in a similar way, where infrastructure companies rise to a similar dominance? First and foremost, it’s worth examining what the AI stack fundamentally looks like right now and which areas seem the most exciting.

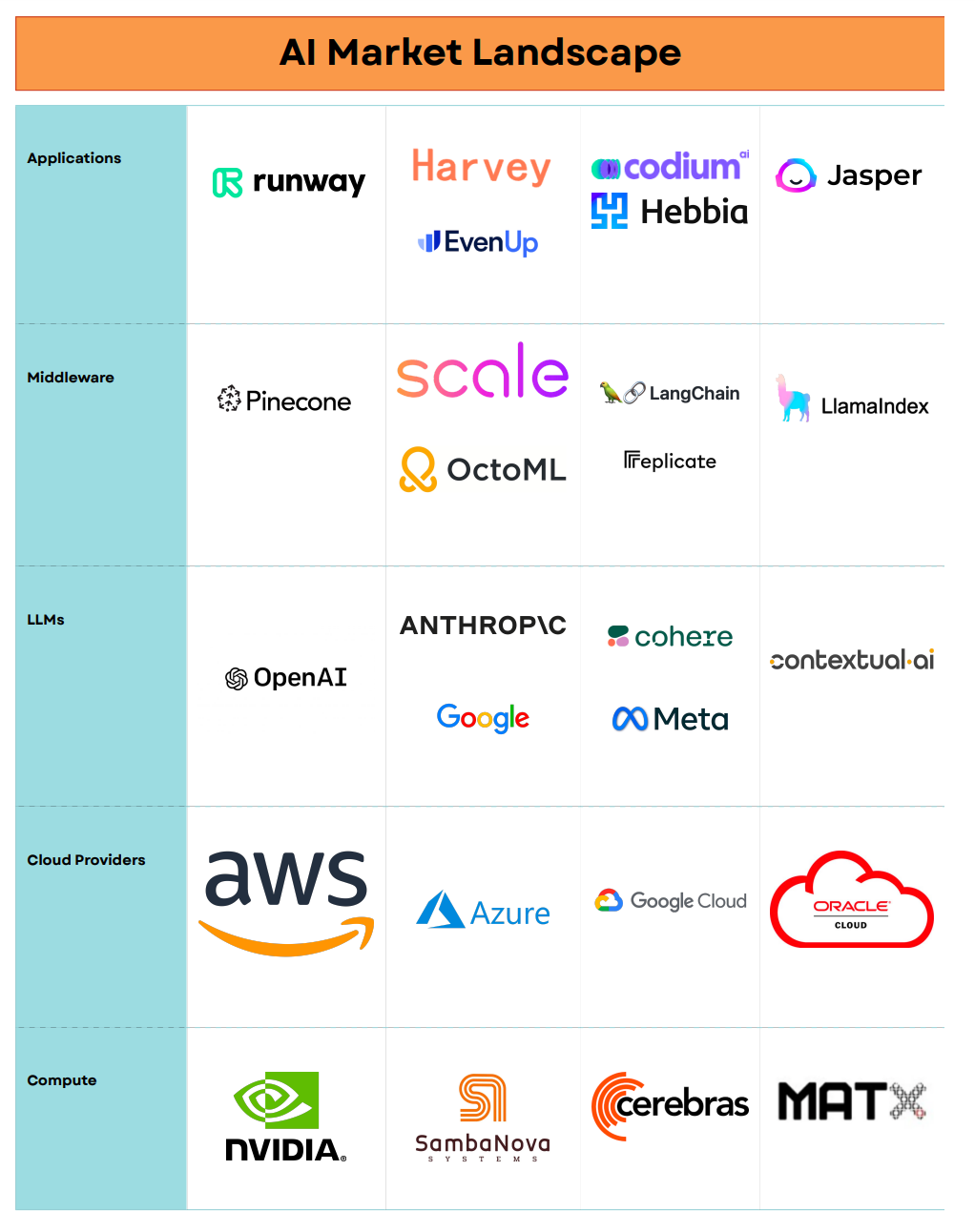

The market map I made (below) is a non-exhaustive look at how the AI stack is shaping up at the moment.

Much ink has been spilled about how companies at the compute layer are winning from this generative AI paradigm shift— NVIDIA has experienced unprecedented growth as the de facto supplier of GPUs for LLM applications, while the big cloud infrastructure providers like AWS, Azure (critical partnership with OpenAI), GCP, and OCI have been huge beneficiaries as well (AWS is still growing 12% at a $92B revenue run rate). On the semiconductor side of things, upstarts like SambaNova (Mayfield backed) and Cerebras (Benchmark backed) are challenging NVIDIA with chips idiosyncratically oriented to handle AI workloads. Another fascinating company I’m keeping my eyes on is MatX— started by Reiner Pope (helped build Google PaLM and wrote Google’s fastest inference software) and Mike Gunter (helped build Google’s TPUs), their goal is to build faster chips for LLMs, and they’ve raised for notable investors including SV Angel, Homebrew, Outset Capital, Swyx (host of the Latent.Space podcast), and Rajiv Khemani (founder of Cavium, Innovium, and now Auradine).

At the foundational model layer, OpenAI is leading the way, having recently hit a $1.3B ARR with their GPT-4 model (and now GPT-4 Turbo) pushing the state of the art of the industry. Not too far behind is Anthropic, building Claude. Cohere, started by Aidan Gomez (one of the authors of the seminal Transformers paper), is building Command, an LLM geared towards enterprise use-cases and fine-tuning. Contextual AI, started by Stanford professor Douwe Kiela, is building LLMs for large-scale enterprises with RAG (retrieval augmented generation) baked into the model itself to minimize hallucinations. Google is in the mix as always, with PaLM (the LLM behind Bard) and the upcoming release of Gemini. The emergence of open-source models have also been interesting and may shift the dynamics of the space; Meta namely has been active in this space with Llama 2.

Middleware, a layer of the AI stack that will be covered in more detail later in this piece, is what I think of as the emergent AI infrastructure set: it spans companies that enable people to turn the potential energy of LLM use-cases kinetic. These include developer frameworks like LangChain and LlamaIndex that let developers connect their own data to LLMs and build context-aware applications and agents, vector databases like Pinecone and Chroma that let developers store their data in the form of vector embeddings and build retrieval mechanisms to make their models smarter and less likely to hallucinate, and data labeling & quality companies like Galileo, Scale, and Snorkel that expand the set of usable data and are really pushing forward the notion of data-centric AI. Compute optimization companies like Replicate and OctoML have also emerged to abstract away the complexities of hosting ML models.

Last but not least, applications are what the end customer interfaces with, and what’s enabled by all the products on the lower levels of the stack. ChatGPT may be the canonical example of an AI consumer application, but other examples include Harvey and EvenUp for legal applications, Codium for code generation, Hebbia for knowledge work and productivity, Runway for media, and Jasper for content. The key thing to note about the application layer is that incumbents are just as capable of building applications, and their products may in fact be preferential because they own the data, distribution, business model, and user experience. GitHub copilot is a picture-perfect example of this— they’re already doing $120M ARR.

There are two key themes that I’m super excited for on the layer of AI infrastructure that I think will drive massive economic value in this AI wave: every engineer becoming an AI engineer and the outflows of a multi-model world with an active open-source community.

Every Engineer Will Be an AI Engineer

A theme that is universal in the evolution of any given technology paradigm is the gradual collapse of barriers to entry for building on any such paradigm, and hence the democratization of applications overlaying it. Machine learning seems to follow this trend— while it was previously “ML wizards” with a background in academia and extensive research experience building in the space, this last year has seen a massive explosion in the general developer activity on top of large language models.

This has been powered by a set of interesting middleware companies. LangChain and LlamaIndex immediately come to mind as wonderful examples of such developer frameworks and products. With LangChain, the critical insight Harrison Chase had when starting the company was recognizing and factoring out a key set of building blocks developers were using when building AI applications: these include prompt templates, output parsers, document loaders, text splitters, and retrievers among other things. The combination of these building blocks, along with large language models (of course), adds up to a chain that can be operationalized as AI applications and agents. LlamaIndex isn’t too dissimilar: as a data framework for LLM applications, they focus on providing data ingestion (including unstructured data like PDFs and video), data indexing, and a query interface to help developers build end-user applications such as document Q&A, data-augmented chatbots, knowledge agents, and structured analytics. Other companies are actively innovating in this space as well, including LastMile, Respell, Stack AI, Baseplate, Graphlit, and Psychic.

On the data side, what makes content accessible and useful for these language models, especially in retrieval settings, is converting them into vector embeddings: a mathematical representation of unstructured data, encapsulating their semantic meaning into an n-dimensional array of floating point numbers. Common embedding models and approaches that convert text to embeddings are word2vec, Google’s BERT model, and OpenAI’s embedding model. Voyage AI, a new company started by Stanford professor Tengyu Ma, has also emerged to build state-of-the-art embedding models, customized to the customer and their domain. These vector embeddings are stored in vector databases— upstart players Pinecone, Chroma, and Weaviate are building brand new products to occupy this niche, while MongoDB’s vector DB product has been gaining traction as well. Collectively, this has added up into a new paradigm called retrieval augmented generation (RAG): harnessing the embeddings stored as context for the LLM, hence augmenting it with knowledge and reducing the likelihood of hallucinations.

For those who are closely following the space, there is an elephant in the room that needs to be addressed: OpenAI. At their DevDay earlier in the month, OpenAI released their Assistants API, which lets developers build applications inside their own applications. This encompasses a code interpreter (allows API to write and run Python code in a sandboxed environment), function calling, and knowledge retrieval (can upload a file to the API, where OpenAI will automatically chunk the documents, store the embeddings, and implement vector search for retrieval to answer user queries). It’s the knowledge retrieval capability that’s the most similar to other companies mentioned above— and hence most threatening to their survival.

There are many schools of thought on this. One perspective is that end-to-end platforms that preserve simplicity ultimately win at the end of the day: this was the insight that guided Steve Jobs in building Apple’s closed ecosystem, and is echoed in how Parker Conrad is building Rippling. The counterpoint that must be held simultaneously, however, is that competition is a force for innovation in markets, and the real kingmaker who determines success in this domain is the aggregate product adoption and what developers (and enterprises) like the best. Especially given the chaos that has played out with OpenAI and the unknowability of their future proximity to compete along these vectors, it’s likely that existing middleware players will be able to maintain their advantaged position if their pace of shipping velocity persists. It’s worth adding that a similar competitive trend played out in the cloud— AWS built competitive features to almost all of the niches of cloud infrastructure that popped up (notably, Redshift to compete with Snowflake), and yet many of the cloud infrastructure players (mentioned above) still ended up victorious. Speciation is a property of the startup ecosystem, and the focus embedded in the specialization of a niche can often triumph against a generalist that is spread fairly thin. OpenAI DevDay served as a crucible moment for many middleware startups, but the ones who are antifragile will continue to push the boundaries of how they serve developers en route to building healthy enterprise software businesses.

I view the hallmark of every great enterprise infrastructure software company as delivering leverage: abstracting away complexity such that builders can spend their time on what gets them up in the morning, hence enabling creativity that couldn’t exist counterfactually. In the context of AI, these products expand the surface area of what developers can work on because they introduce LLMs, a critical accelerant, to the fold and let them ask the question of “how could these language models enable me to radically rethink and reinvent what could be built here?”

The other class of companies enabling AI creativity from non-ML practitioners is in a category I think of as compute optimization. Underlying this is a directional arrow of progress in virtualization and portability: companies like VMWare and XenSource virtualized servers in the mid-2000s, and Google (Kubernetes) and Docker did the same at the application layer with containerization in the 2010s. How does this manifest in the age of generative AI? One can’t move up the stack from applications, but the current AI wave has led to overwhelming demand lower in the stack with GPUs, and hence has ushered in the trend of GPU portability. This begets a new set of companies building platforms to abstract away the complexities of hosting ML models while letting developers scale GPU usage up and down based on demand.

Replicate is top of mind as one such company— coincidentally, their co-founder, Ben Firshman, led open-source product efforts at Docker. This open-source vein runs through Replicate as well, as the company is commercializing Cog, an open-source tool that lets developers package ML models into a production-ready container format. Another company in this realm with open-source roots is OctoML, productizing Apache TVM— an open-source ML compiler framework helping developers optimize their models for specific hardware. A key hallmark of OctoML’s feature set is the ability to transform ML models into a portable software function that can be interacted with via an API; this radically increases the ease of integration with existing workflows. I’m also bullish on Modal Labs: their platform lets developers and enterprises run code in the cloud without needing to configure the necessary infrastructure, and hence only pay for what they use. Customers of Modal include Ramp and Substack, indicative of the promise in enterprise adoption of these products. Other companies riding this wave include Modular, Baseten, and RunPod.

Outflows of a Multi-Model World

If there’s anything that this weekend showed us, it’s that companies that appear indomitable are often far from. Prior to this past weekend, it could’ve been intellectually comfortable to assume the manifestation of a world where GPT-4 Turbo was the dominant, de facto LLM— many technology markets tend to be winner-take-all (just look back to the search wars), and OpenAI was surely ahead of the pack. But the certainty of those conclusions dissipated over the last few days, especially as customers realized the risks of high dependency on a single model provider.

Who are the alternatives? Beyond Anthropic and Google in the mix for building competitive base foundational models and Cohere and Contextual building enterprise-specific solutions, it’s worth taking a look at open-source as well. From Facebook’s Llama-2 to Databrick’s Dolly 2.0 and MosiacML’s MBT-7B, there has been a healthy amount of activity in the world of open-source LLMs.

The question of whether closed or open source models will win a false dichotomy; much like biological speciation, both serve different niches. Open-source models can be cheaper (Vicuna-13b was an open-source chatbot trained on Llama that attained 90% of the quality for only $300), more controllable (developers can adjust the weights and parameters of the model to optimize for their idiosyncratic use case), and more transparent (less of a black box) than closed source models. The coexistence of open and closed source models can manifest in enterprises using closed-source models out of the box for more general use-cases, while customizing and deploying a panoply of smaller open-source models for more specific and niche problems. All the middleware companies mentioned above enable developers to mix and match models as they wish, hence they benefit from the tailwinds of a multi-model world.

Ultimately, it is far from known how the entire AI landscape will shake up and on what vectors enterprises will adopt these nascent technologies. What is known, however, from looking at developer activity over the past many months, is that generative AI is here to stay. While OpenAI is a keystone agent in the ecosystem that we’re all beneficiaries of, a theme that has echoed throughout this piece is that unlocking the full potential of any given technology goes beyond the ingenuity of any one business. If there’s one parallel to the cloud that this past weekend reiterated, it’s that generative AI will not come down to one company, and has the room to support multiple winners. I continue to be fascinated by the tools that are democratizing creation— turning every engineer into an AI engineer and expanding the scope of problems they can tackle and applications they can build. I look forward to seeing the $10b+ companies that are built here, and more importantly the way they enable AI applications to change the way we work, live, and play!